This week was mostly eaten up by revising a piece of work I had hoped to hand over before I left for Birmingham. I did, though, have a meeting with an air quality scientist from the University of Birmingham, and I am hopeful that the scene has been set for a meaningful art-science collaboration to take place.

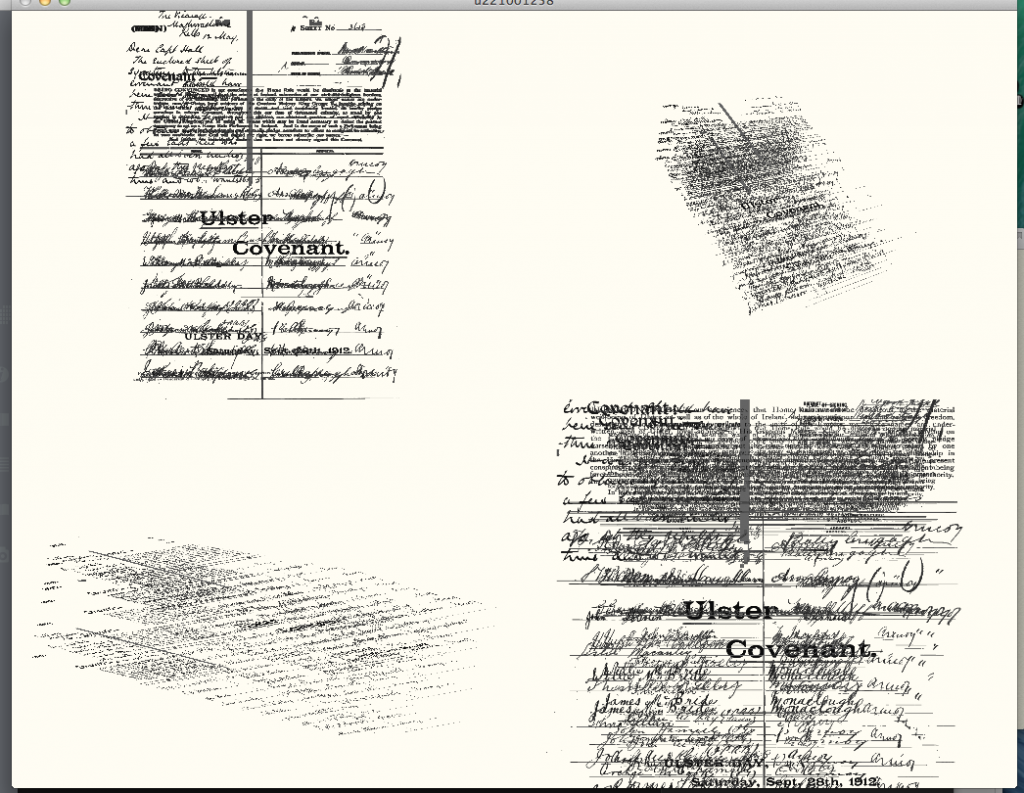

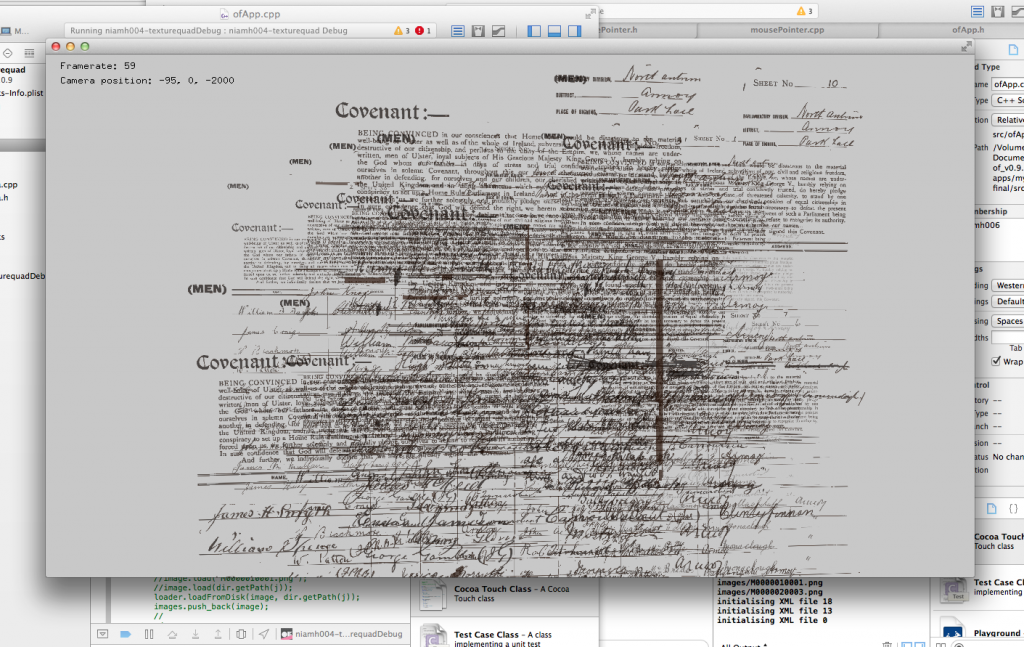

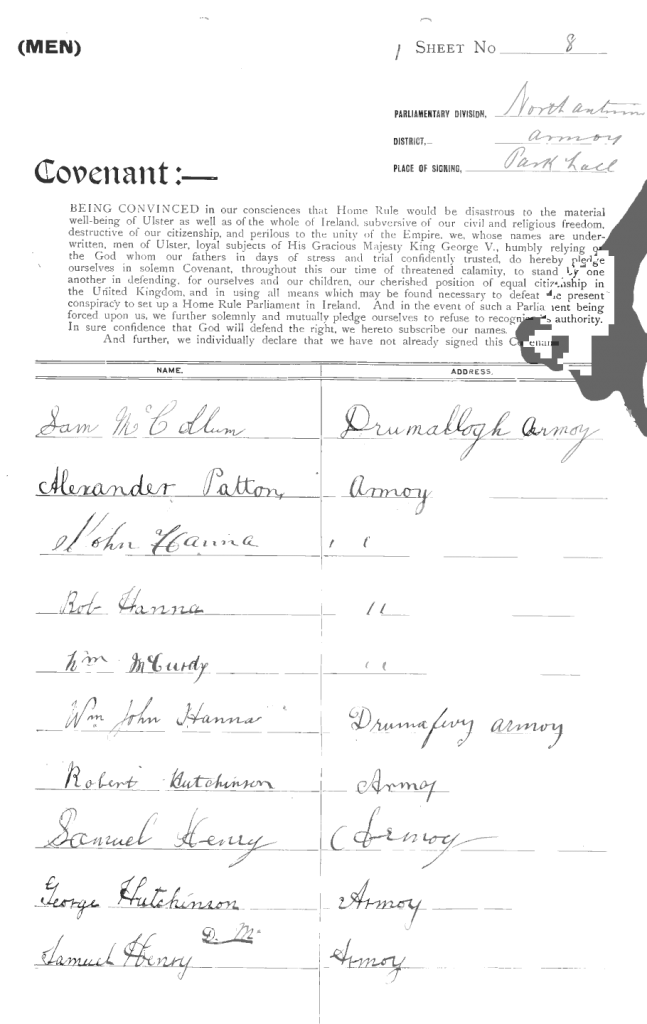

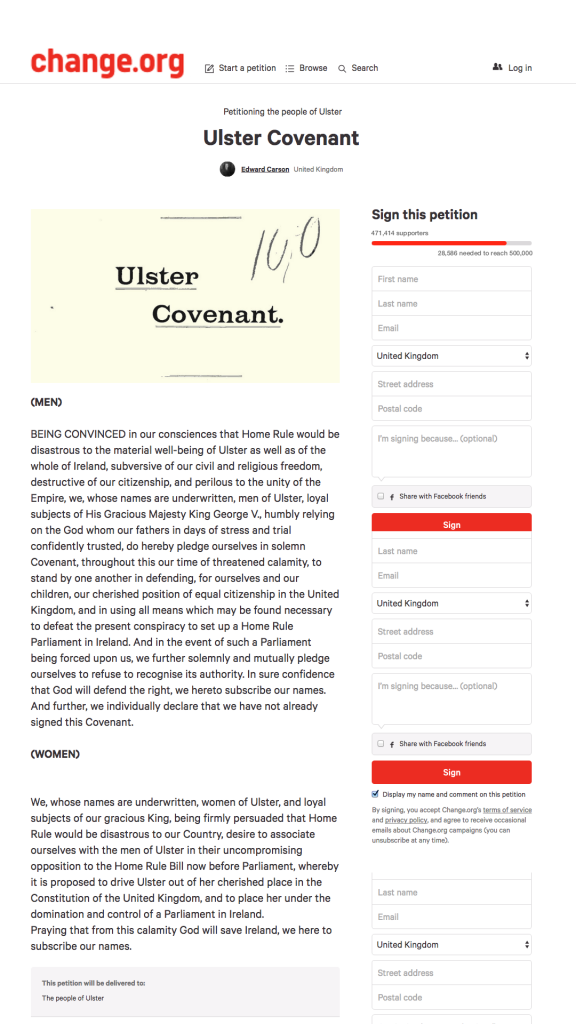

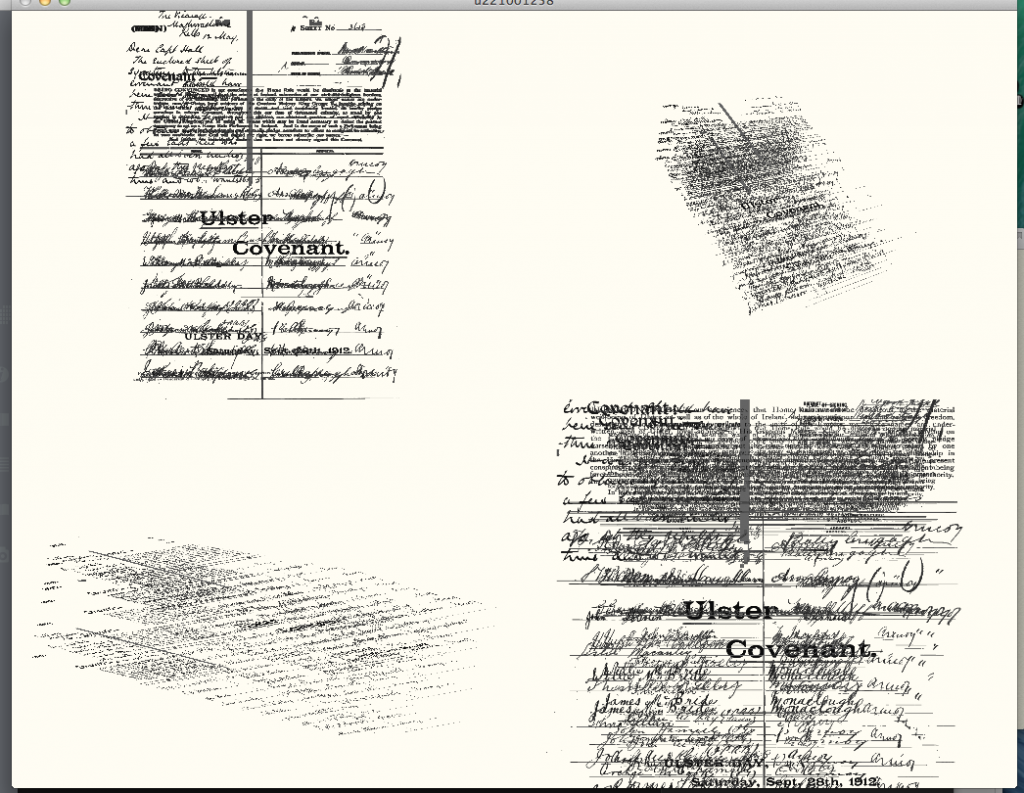

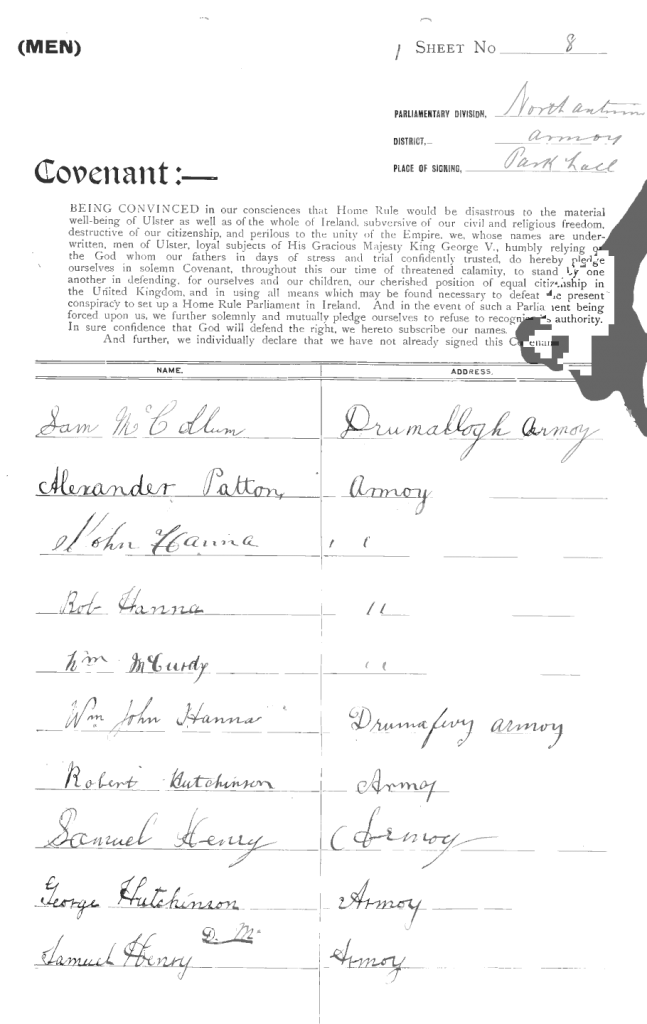

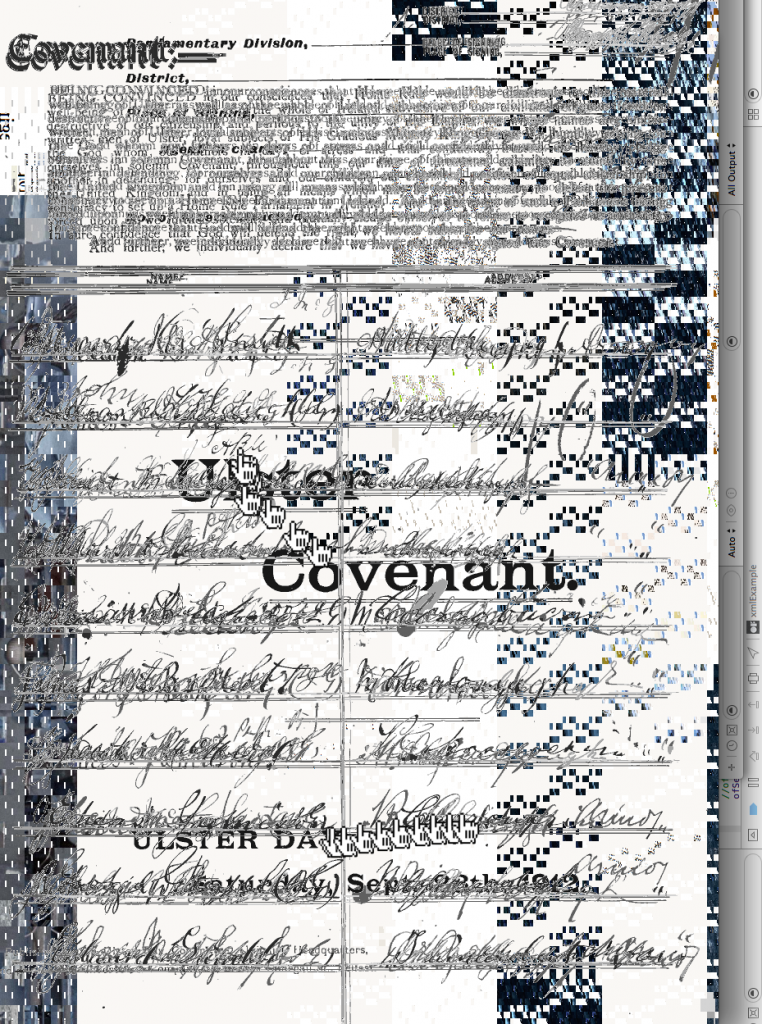

The piece that I spent the week re-working was from Diagramming the Archive and used PRONI’s archive of signatures of the Ulster Covenant. The curator’s brief involved layering the images to give a sense of the mass of inscriptions that were collected. Initially I had tried to inject some movement and perspective into this by selecting images at random and layering them in space. I had wanted to do this from four simultaneous camera angles using different projection methods, but when I came to port my code to openFrameworks on the Raspberry Pi 3 the necessary grunt wasn’t there.

In the end I settled on a single camera angle, selecting images at random and allowing them to fade in, move across the frame and fade out again.
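As a rough illustration of that fade in, drift, fade out behaviour, here is a minimal sketch in Python. It is not the code from the piece, which ran in openFrameworks. The `Drift` class and its `lifetime` and `fade` parameters are hypothetical names made up for this example, and the linear fades are an assumption.

```python
from dataclasses import dataclass


@dataclass
class Drift:
    """One layered image moving across the frame (hypothetical helper)."""
    start_x: float   # horizontal position when the image appears
    end_x: float     # horizontal position when it disappears
    lifetime: float  # total seconds on screen
    fade: float      # seconds spent on each fade, must be > 0

    def alpha(self, t: float) -> float:
        """Opacity between 0 and 1 at t seconds after the image appeared."""
        if t <= 0 or t >= self.lifetime:
            return 0.0
        fade_in = t / self.fade
        fade_out = (self.lifetime - t) / self.fade
        # Whichever ramp is lower wins, capped at fully opaque.
        return min(1.0, fade_in, fade_out)

    def x(self, t: float) -> float:
        """Horizontal position, moving linearly from start_x to end_x."""
        progress = min(max(t / self.lifetime, 0.0), 1.0)
        return self.start_x + (self.end_x - self.start_x) * progress
```

Each frame, the drawing code would ask every active image for its `alpha` and `x` and retire it once its lifetime has passed, at which point a new random image can take its place.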

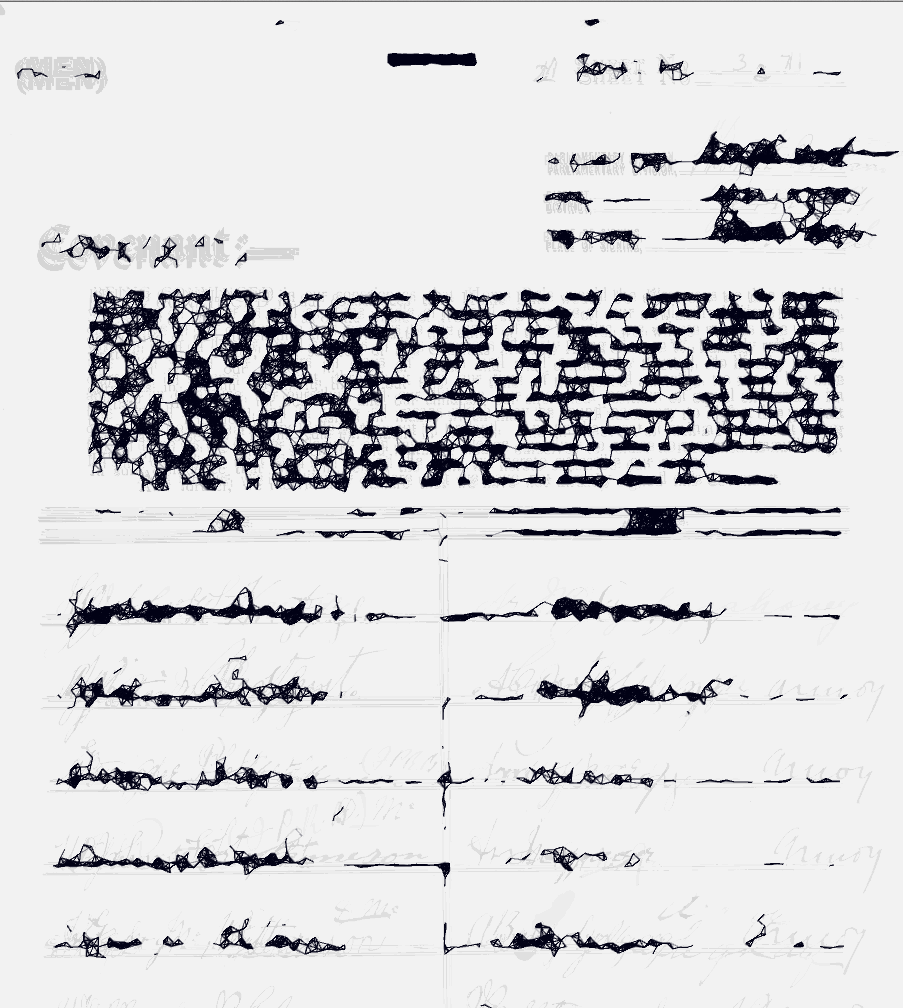

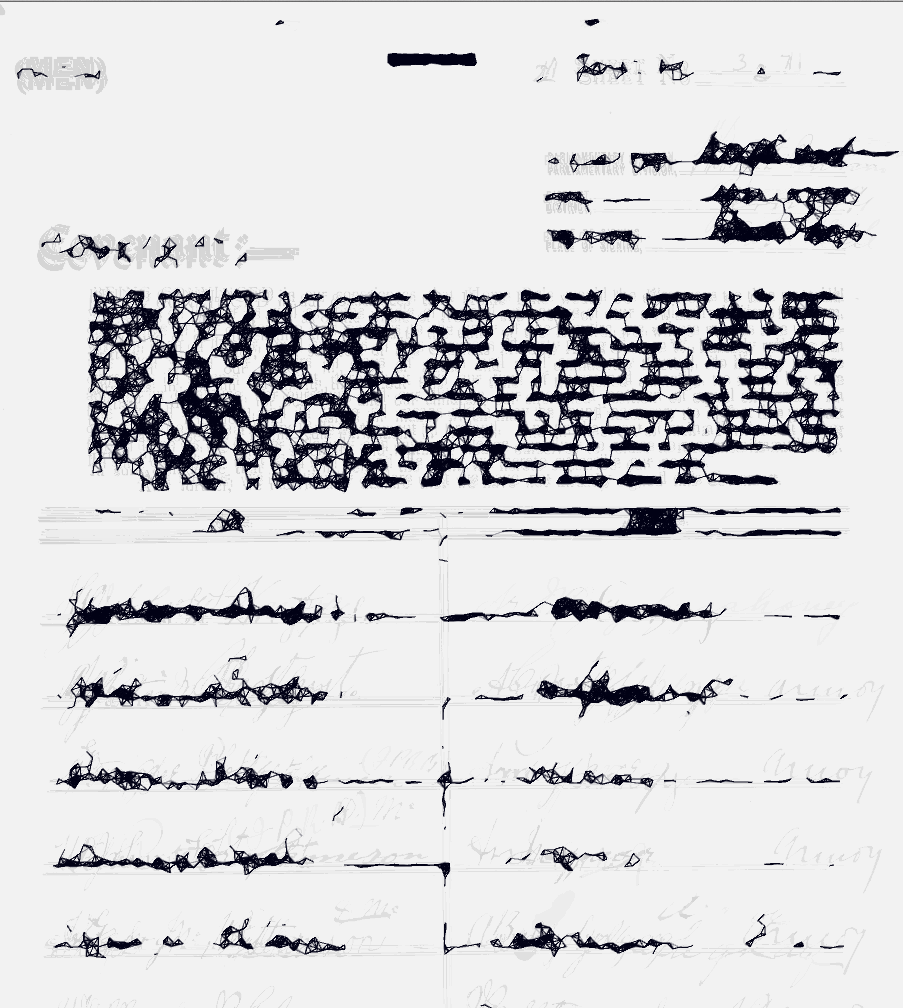

But the effect was all a bit slideshow, so I went back and constructed a number of other studies. They all involved layering in different ways and looking at the density of inscriptions. One involved taking the dense parts and using them as the starting point for geometric drawing algorithms. The others just averaged large sets of images to give a feel of the archive as a whole, which could potentially be used as the starting point for something else.
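The averaging studies can be sketched along these lines. This is an illustrative Python version rather than the code used for the piece, and it assumes each image has already been loaded as a flat list of greyscale pixel values of the same size; `running_mean` is a name invented for the example.

```python
def running_mean(images):
    """Average equally sized greyscale images one at a time.

    `images` is any iterable of flat pixel lists (an assumption for this
    sketch). Only the current mean is kept in memory, so a large archive
    never has to be loaded all at once.
    """
    mean = None
    for count, image in enumerate(images, start=1):
        if mean is None:
            mean = [float(p) for p in image]
            continue
        if len(image) != len(mean):
            raise ValueError("all images must have the same number of pixels")
        for i, p in enumerate(image):
            # Incremental update: nudge the mean towards the new pixel.
            mean[i] += (p - mean[i]) / count
    return mean
```

With dark ink on light paper, the darkest areas of the averaged image are where signatures overlap most often, which is one way of reading the density of inscriptions.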

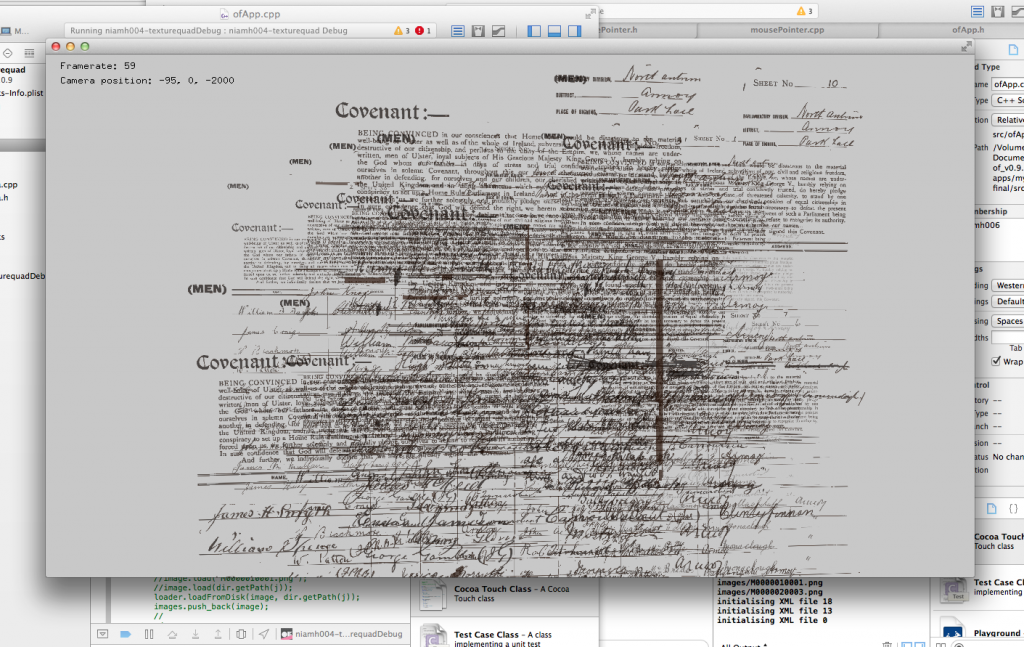

At one point I started drawing images from the archive on my computer having forgotten to clear the buffer I was writing to, and got some really nice glitch effects based on the leftover imagery my graphics card had previously been drawing.

Given that the archive images I had been supplied with already had a number of glitches in them, presumably artefacts of the scanning and compression processes, I decided to base the aesthetic of the work around that. Sitting next to Antonio Roberts at BOM Lab probably helped inspire me in this direction.

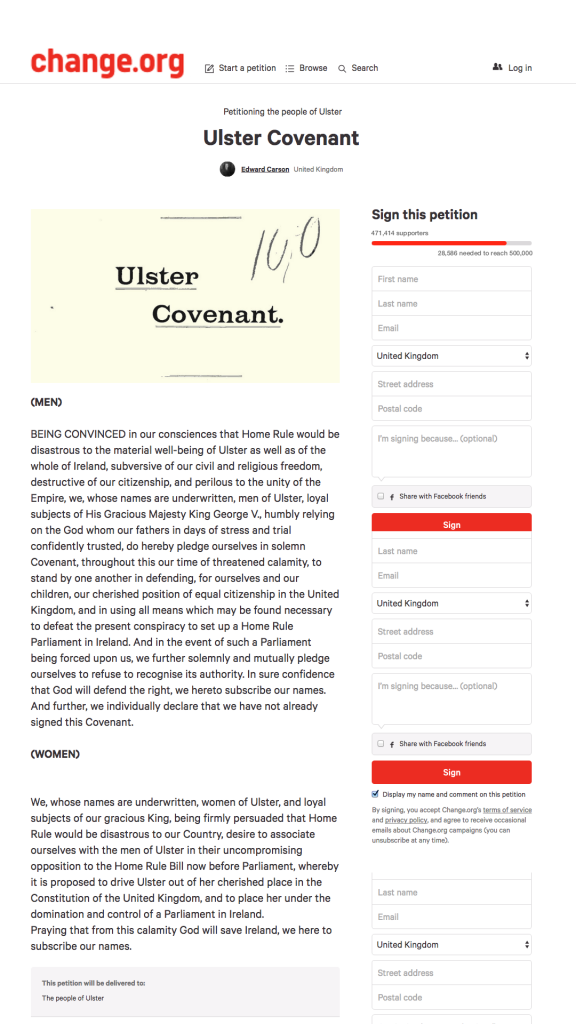

Unfortunately the background buffer would not glitch on the Pi in the same way as on OS X, so I ended up creating the backdrop images on my Mac and using them behind the layered texts. This made me think that, as I now had a free hand to import whatever images I wanted into the backdrop, not just things that had previously been on my Mac’s screen (the first glitches were just copies of the Xcode screen before my app started working), I should perhaps think about what imagery would make the most sense in the work. To me the glitch aesthetic immediately situated the work in the present, so I started to think about how James Craig might go about galvanising support for his cause if the situation played out again. I created mockups of the Covenant text on modern petition websites and then glitched those to use as the backdrops. To further give a sense of this conflation of real past events with how they might be approached in the present, I animated lots of mouse pointers scanning across the text as if they were signing it.

The curator had stipulated that my piece had to link up wirelessly with Ed & George’s drawing machine, which had been commissioned to create imagery based on the same set of images. We had struggled for a long time to find a meaningful way of linking them that was obvious to an audience but not inserted purely for its own sake. The recurring problem was that their machine moves very slowly to create its artwork, while my screen-based work could move at a much faster pace. We also quite liked that contrast between them, so slowing mine down to their speed seemed wrong. I had decided to make my pointers, symbolising the people signing the petition, beachball whenever the work selected new images to layer, as a way of further playing with the glitch theme and injecting some humour into the work. We thought it would be fun if, while my piece beachballed, theirs paused too, as though the whole installation had been temporarily brought to a halt under the weight of loading new imagery from the archive.

You can see the work at The Irish Architectural Archive, 45 Merrion Square, Dublin (June 1st – 30th) and at The Linen Hall Library, 17 Donegall Square, Belfast (September 5th – 30th).

Next week I will be mostly working on recording ambient sounds and air quality data.